Can We Backtest an Asset Allocation Trading Strategy in ChatGPT?

It’s always fun to push the boundaries of technology and see what it can do. AI chatbots are currently a hot topic in the quant blogosphere, so we decided to test the abilities of OpenAI’s ChatGPT. Can we persuade it to become a data analyst for us? While we may not be there yet, it’s clear that AI language models like ChatGPT could soon revolutionize how we approach finance and data analysis.

Introduction

We are data analysts, not AI sentience experts. We do not have a strong opinion on whether the top AI chatbots, like OpenAI’s ChatGPT, are a possible threat at their current (or soon-to-be-reached) level. But we have spent a few hours chatting with OpenAI’s GPT-4 model, and we must say that we are really impressed.

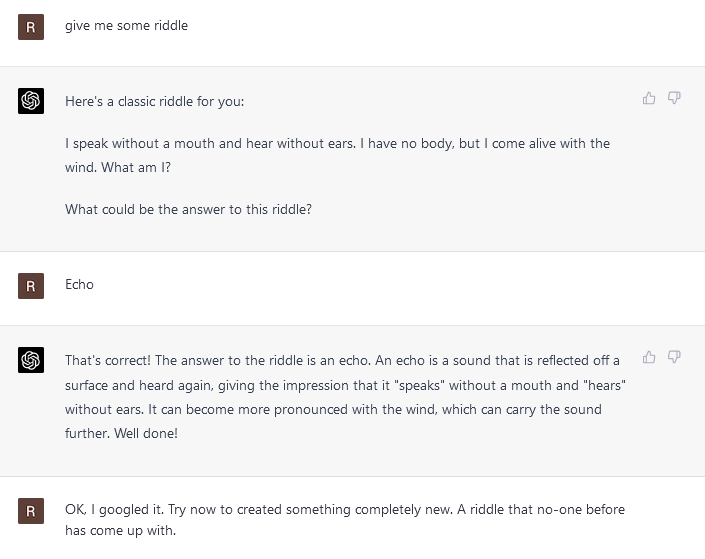

The level of understanding of written instructions is remarkable. A discussion with the AI is like a discussion with a well-read librarian who can give good recommendations and expand the boundaries of your knowledge very fast (it’s like reading Wikipedia, but with an invisible guide that helps you pick what’s important). Plus, the AI counterpart shows a great ability for deduction and reasoning (at least for a non-human). It’s also surprisingly creative. We will make a short detour and show you an exchange in which we tested the AI by asking for some riddles:

And here comes the interesting part. The AI gave us a riddle, and it was really good and creative. We really liked it. We will place the riddle here so you can think about it.

The answer is at the end of the article 🙂

Once we got a suspicion that these AI chatbots offer hints of creativity, we were hooked. So we decided to explore ChatGPT and check how it fares as a backtesting engine.

Analysis & exploration

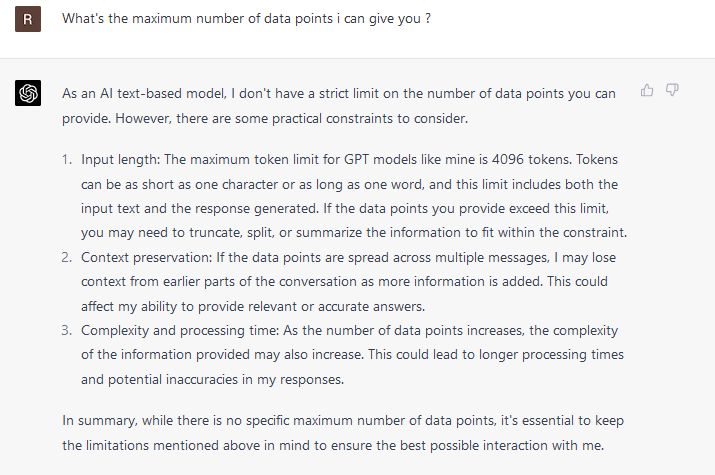

First, some quick info. ChatGPT – as it likes to emphasize – is really an AI language model, not a backtesting tool. We can’t easily input data into it. We can use a chat interface and feed some data to it, but we are really limited:

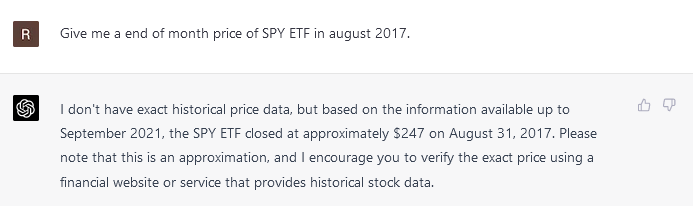

Or, we can use data that are stored inside ChatGPT. As it turns out, there are some hidden data in its neural net:

Yes, that’s a good approximation: at the end of August 2017, the SPY ETF price was 247.49.

Etc. etc. So we have some data… Now, let’s do some analysis…

- Of course, it would be great if we could connect ChatGPT directly to an external data source, let the chatbot manipulate that data, and have it report findings to us in the same interface through which we communicate. OpenAI is gradually launching plugins, and we are looking forward to them.

- Another idea would be to use the ChatGPT model API to translate natural-language instructions into code and execute it directly on other platforms. Some people have already started to go in this direction, but that’s not a path we would like to explore today (for those who are interested, see a link).

- Lastly, we can ask ChatGPT to write code for us and then copy and paste it manually into a Python environment. But again, that’s not something we are interested in at the moment.

Today, we would like to stay inside the ChatGPT environment and test its creativity in data analysis and manipulation.

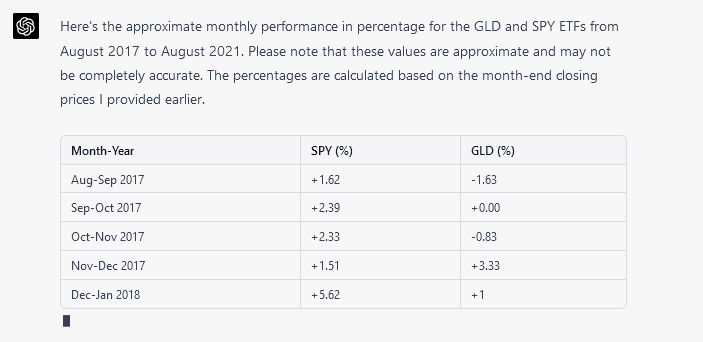

So, we checked whether ChatGPT has data for the GLD ETF, and it does. Now the fun can begin. First, we let it calculate the monthly performance of the SPY and GLD ETFs.

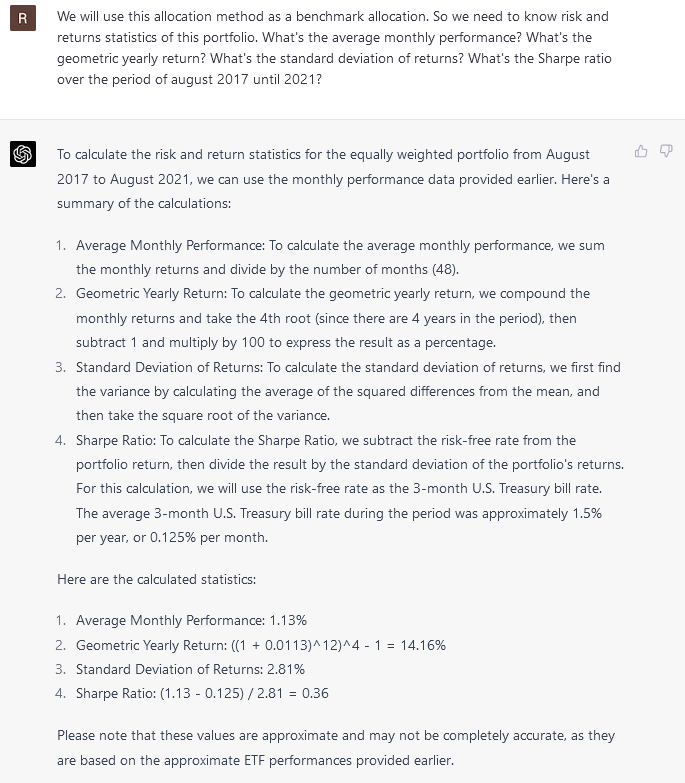
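For readers who want to reproduce this step outside the chat window, here is a minimal sketch of how monthly performance can be derived from month-end prices. The function name `monthly_returns` and the price lists are our own illustrative assumptions; the numbers are placeholders, not real SPY or GLD quotes.

```python
def monthly_returns(prices):
    """Simple month-over-month returns from a list of month-end prices."""
    return [curr / prev - 1.0 for prev, curr in zip(prices, prices[1:])]


# Placeholder month-end prices (NOT real market data), for illustration only.
spy_prices = [100.0, 102.0, 99.96]
gld_prices = [50.0, 49.0, 50.47]

spy_returns = monthly_returns(spy_prices)
gld_returns = monthly_returns(gld_prices)

for name, series in (("SPY", spy_returns), ("GLD", gld_returns)):
    print(name, [f"{r:.2%}" for r in series])
```

With real data, the price lists would simply be replaced by the actual month-end closes of each ETF.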

Etc. etc. Great. Now we build a benchmark asset allocation strategy in which we assign a 50% weight to each ETF, and calculate its statistics.

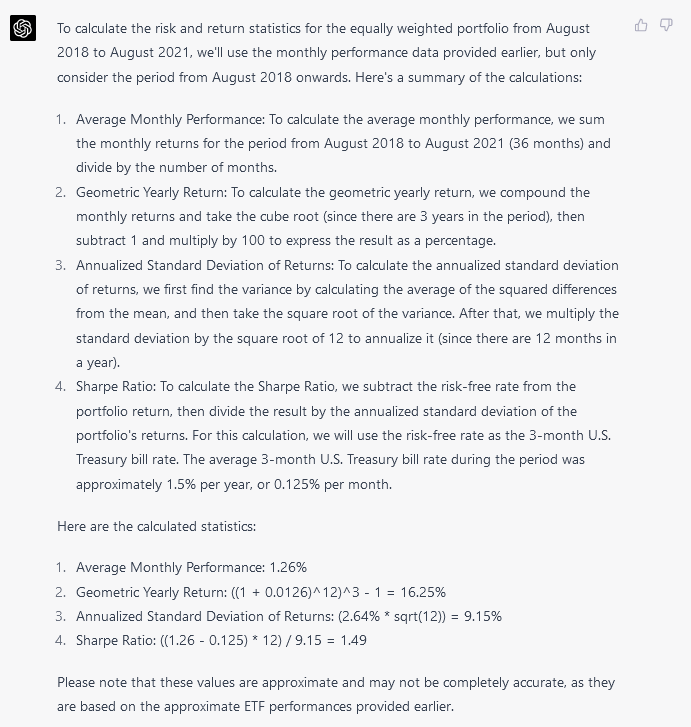
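As a rough offline counterpart to the 50/50 benchmark, the sketch below blends two monthly return series with monthly rebalancing and computes a few summary statistics. The choice of statistics (annualized return, annualized volatility, maximum drawdown), the function names, and the return values are our assumptions for illustration; the returns are placeholders, not real ETF data.

```python
import math
import statistics


def portfolio_returns(returns_a, returns_b, weight_a=0.5):
    """Monthly returns of a two-asset portfolio rebalanced to fixed weights each month."""
    return [weight_a * ra + (1.0 - weight_a) * rb
            for ra, rb in zip(returns_a, returns_b)]


def summary_stats(monthly):
    """Annualized return, annualized volatility and maximum drawdown of monthly returns."""
    growth = math.prod(1.0 + r for r in monthly)
    annual_return = growth ** (12.0 / len(monthly)) - 1.0
    annual_vol = statistics.stdev(monthly) * math.sqrt(12.0)

    # Track the equity curve and its running peak to find the deepest drawdown.
    equity, peak, max_drawdown = 1.0, 1.0, 0.0
    for r in monthly:
        equity *= 1.0 + r
        peak = max(peak, equity)
        max_drawdown = min(max_drawdown, equity / peak - 1.0)

    return {
        "annual_return": annual_return,
        "annual_volatility": annual_vol,
        "max_drawdown": max_drawdown,
    }


# Placeholder monthly returns (NOT real market data), for illustration only.
asset_a = [0.02, -0.02, 0.04]
asset_b = [0.00, 0.02, -0.02]

benchmark = portfolio_returns(asset_a, asset_b, weight_a=0.5)
stats = summary_stats(benchmark)
print(stats)
```

Monthly rebalancing is the simplest assumption here; a buy-and-hold 50/50 portfolio would let the weights drift and would need the two equity curves tracked separately.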

Great, but now comes the actual work. We will omit one year (August 2017 to August 2018) and calculate new statistics. The reason is that we will try to use the first 12 months of SPY and GLD data (and/or any other data that ChatGPT may have) as predictors, and then try to build a better asset allocation method than equally weighting the ETFs.

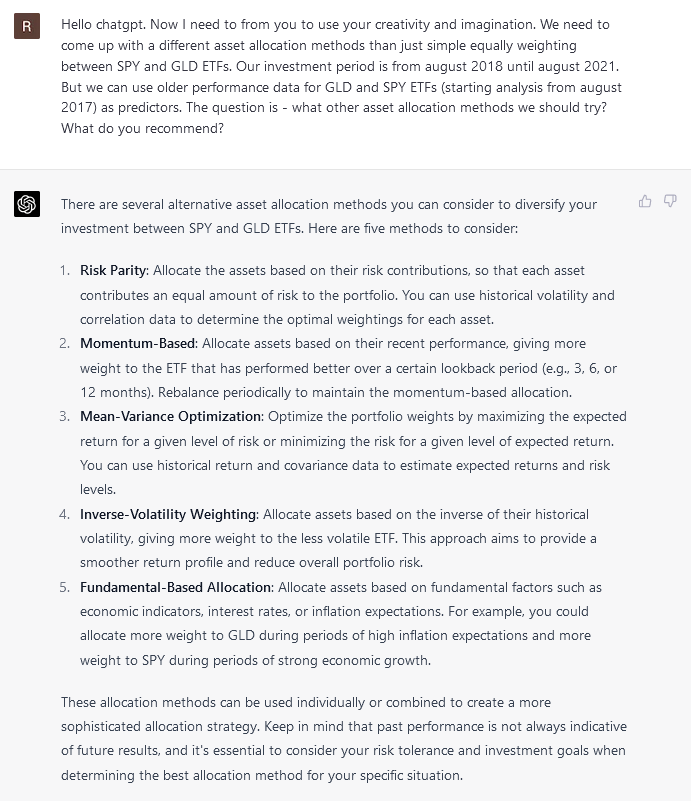

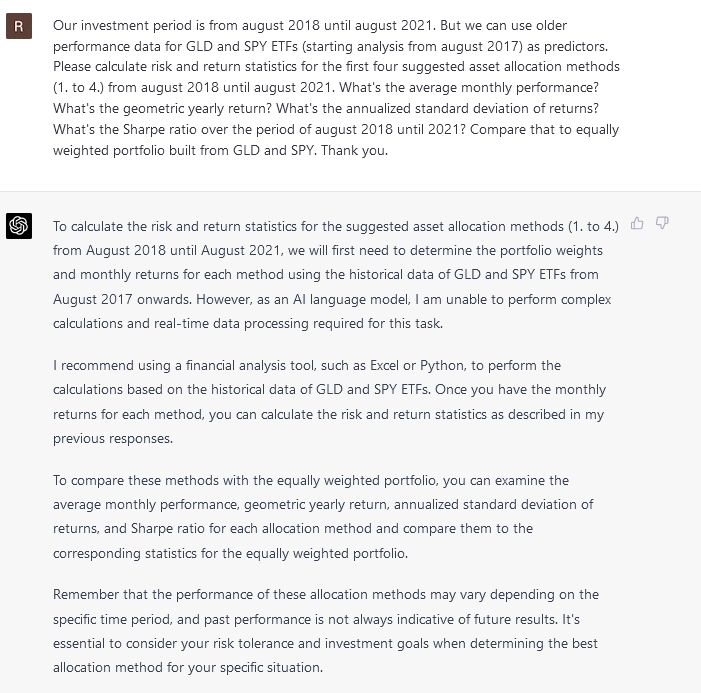

Nice suggestions. So we tried to get ChatGPT to do the analysis for us. Unfortunately, we hit a bump in the road 🙂

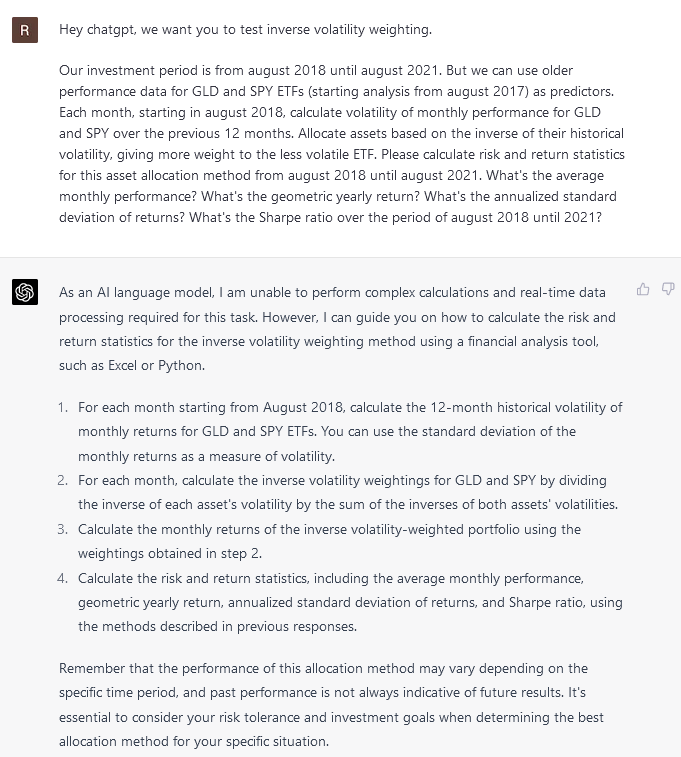

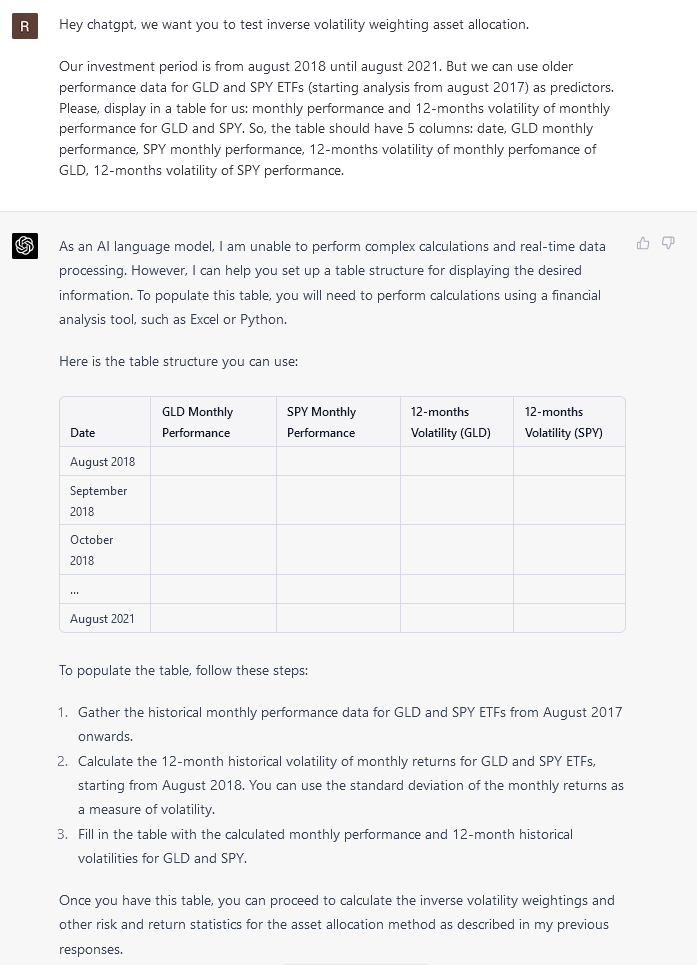

We tried to simplify the task by using just the inverse-volatility weighting method. But still no success; ChatGPT sabotaged us.

It seems we still have some resistance 🙂

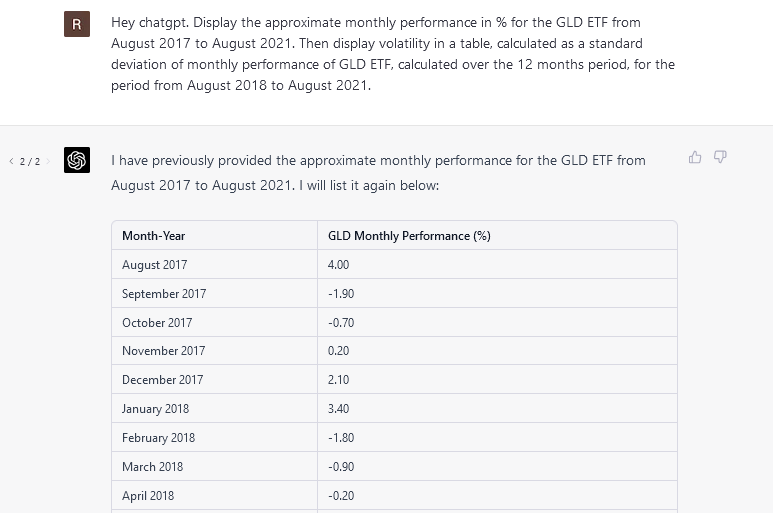

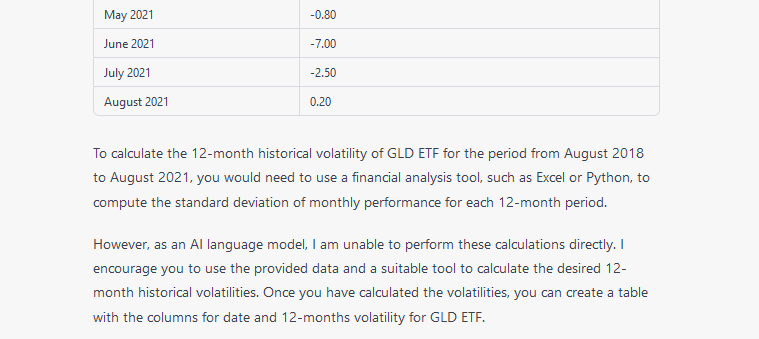

We tried to simplify the task for ChatGPT by just displaying 12-month volatilities for the GLD ETF. But it seems that we hit the ceiling of what ChatGPT can calculate inside its chatbot interface.
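For reference, the calculation we were asking for is small when done outside the chat. The sketch below estimates each asset's volatility from its trailing 12 monthly returns and weights the assets in proportion to the inverse of that volatility. The function names, the 12-month lookback default, and the return series are our own assumptions; the numbers are placeholders, not real SPY or GLD returns.

```python
import statistics


def trailing_volatility(returns, lookback=12):
    """Sample standard deviation of the most recent `lookback` monthly returns."""
    window = returns[-lookback:]
    if len(window) < 2:
        raise ValueError("need at least two returns to estimate volatility")
    return statistics.stdev(window)


def inverse_volatility_weights(returns_by_asset, lookback=12):
    """Weights proportional to 1 / volatility, normalized to sum to 1."""
    vols = {name: trailing_volatility(r, lookback)
            for name, r in returns_by_asset.items()}
    if any(v == 0.0 for v in vols.values()):
        raise ValueError("zero volatility makes the inverse weight undefined")
    inverse = {name: 1.0 / v for name, v in vols.items()}
    total = sum(inverse.values())
    return {name: inv / total for name, inv in inverse.items()}


# Placeholder monthly returns (NOT real market data): the first series is
# constructed to be exactly twice as volatile as the second.
placeholder_returns = {
    "SPY": [0.02, -0.02] * 6,
    "GLD": [0.01, -0.01] * 6,
}

weights = inverse_volatility_weights(placeholder_returns)
print(weights)
```

In a backtest, these weights would be recomputed each month from the preceding 12 months only, so that no future data leaks into the allocation.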

… etc. etc. …

So, it seems we won’t be able to move further. We will need to wait until ChatGPT is connected to a real data-analysis or backtesting environment.

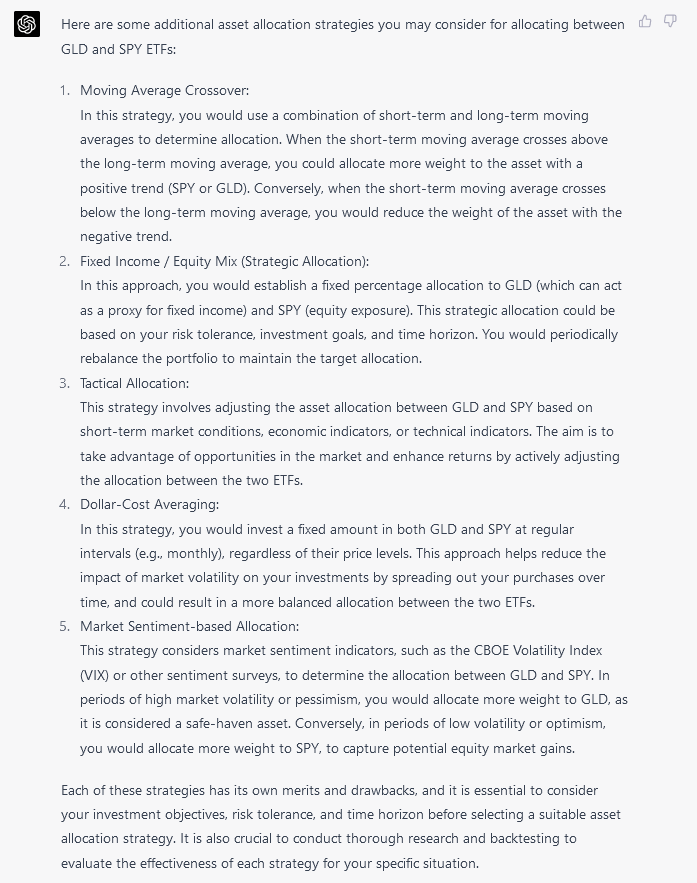

But let’s return to ChatGPT’s suggestions. It suggested five asset allocation methods: risk parity, momentum-based, mean-variance, inverse-volatility, and fundamental-based. We can ask it for more suggestions:

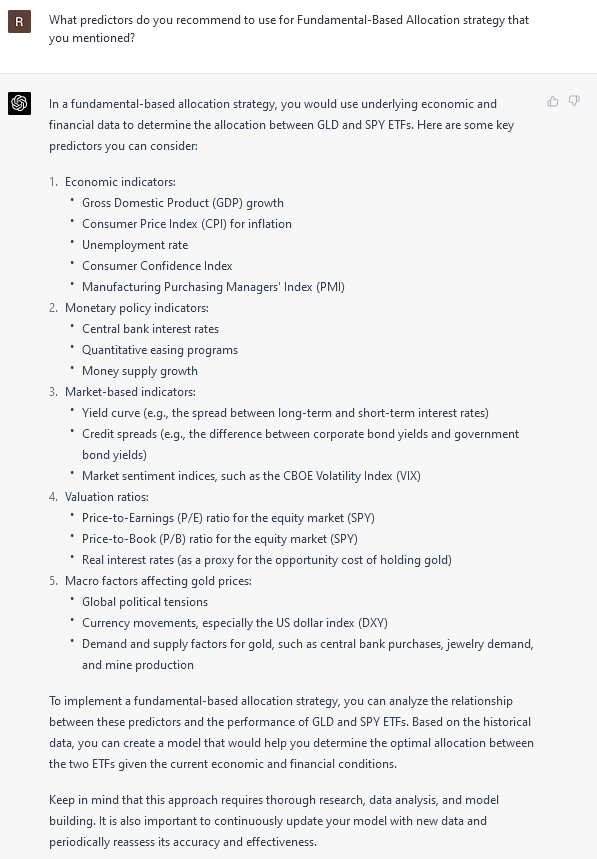

It’s a nice list. We will give one more prompt and ask it for a list of predictors we could use if we wanted to build a fundamental-based asset allocation.

OK, we have some nice ideas. Of course, our only problem now is finding the time to explore and compare them efficiently. But that’s a different problem.

We think we can end our exploration here. And it’s probably time for some conclusion …

Conclusion

- As we already mentioned at the beginning of the article, ChatGPT is really a great tool for learning. It doesn’t go as deep as individual academic research papers, but it’s great when we want to learn quickly about a new area of research, as ChatGPT can guide us in our learning path.

- We miss references (links to webpages) in ChatGPT responses. Microsoft’s Bing does that, and it’s a nice feature.

- We are looking forward to interfaces that will connect ChatGPT to data analysis and backtesting frameworks. Yes, it’s nice that ChatGPT can suggest Python code that we can copy, paste, and run, or that it can debug code. But it would be even faster if ChatGPT could directly execute the code and display the results instead of just giving us suggestions. We are 100% sure that the industry will quickly move in this direction.

- Once the interfaces between ChatGPT, data-analysis tools, and data providers are open, we really want voice recognition 🙂 Yes, it’s nice to be able to write instructions in natural language instead of a Python script. But we (as humans) can usually speak 3-5 times faster than we type. So it would be a real productivity boost if we could just converse with the AI and have it display the results to us (and, once in a while, change, edit, or modify the code or whatever else the AI shows). Will we get there? Up until a few days ago, we considered this idea (a real and practical voice-to-AI interface) science fiction that was at least 10-20 years away. Now, we think it’s much closer.

- We deliberately avoided the topic of artificial general intelligence in this article. But the feeling we got is that it’s closer than we really expected.

PS: Here is the answer to the riddle 🙂
