Can We Explain Abudance of Equity Factors Just by Data Mining? Surely Not.

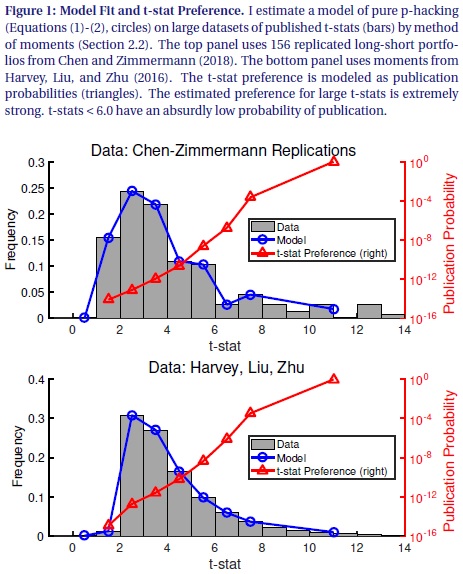

Academic research has documented several hundreds of factors that explain expected stock returns. Now, question is: Are all this factors product of data mining? Recent paper by Andrew Chen runs a numerical simulation that shows that it is implausible, that abudance of equity factors can be explained solely by p-hacking … Author: Chen Title: The Limits of P-Hacking: A Thought Experiment Link: https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3358905 Abstract: Suppose that asset pricing factors are just p-hacked noise. How much p-hacking is required to produce the 300 factors documented by academics? I show that, if 10,000 academics generate 1 factor every minute, it takes 15 million years of p-hacking. This absurd conclusion comes from applying the p-hacking theory to published data. To fit the fat right tail of published t-stats, the p-hacking theory requires that the probability of publishing t-stats < 6.0 is infinitesimal. Thus it takes a ridiculous amount of p-hacking to publish a single t-stat. These results show that p-hacking alone cannot explain the factor zoo. Notable quotations from the academic research paper: “Academics have documented more than 300 factors that explain expected stock returns. This enormous set of factors begs for an economic explanation, yet there is little consensus on their origin. A p-hacking (a.k.a. data snooping, data-mining) offers a neat and plausible solution. This cynical explanation begins by noting that the cross-sectional literature uses statistical tests that are only valid under the assumptions of classical single hypothesis testing. These assumptions are clearly violated in practice, as each published factor is drawn from multiple unpublished tests. In this well-known explanation, the factor zoo consists of factors that performed well by pure chance. In this short paper, I follow the p-hacking explanation to its logical conclusion. To rigorously pursue the p-hacking theory, I write down a statistical model in which factors have no explanatory power, but published t-stats are large because the probability of publishing a t-stat ti follows an increasing function p(ti). I estimate p(ti ) by fitting the model to the distribution of published t-stats inHarvey, Liu, and Zhu (2016) and Chen and Zimmermann (2018). The p-hacking story is powerful: The model fits either dataset very well.

| Do you want to test these ideas yourself? We offer our readers Historical Trading Data Discounts. |

Are you looking for more strategies to read about? Sign up for our newsletter or visit our Blog or Screener.

Do you want to learn more about Quantpedia Premium service? Check how Quantpedia works, our mission and Premium pricing offer.

Do you want to learn more about Quantpedia Pro service? Check its description, watch videos, review reporting capabilities and visit our pricing offer.

Do you want algorithmic access to the full Quantpedia database via the API? Subscribe to Quantpedia Pro, ask for an API key, and explore the in/out-of-sample statistics, source academic papers, and code snippets — ideal for quantitative research, systematic trading workflows, and AI model training.

Are you looking for historical data or backtesting platforms? Check our list of Algo Trading Discounts.

Or follow us on:

Facebook Group, Facebook Page, Twitter, Linkedin, Medium or Youtube

Share onLinkedInTwitterFacebookRefer to a friend